The Revenge of the Data Scientist

Agentic systems need more than prompts and model calls. They need surrounding machinery that lets the system observe itself and improve: logs, metrics, traces, tests, and specifications.

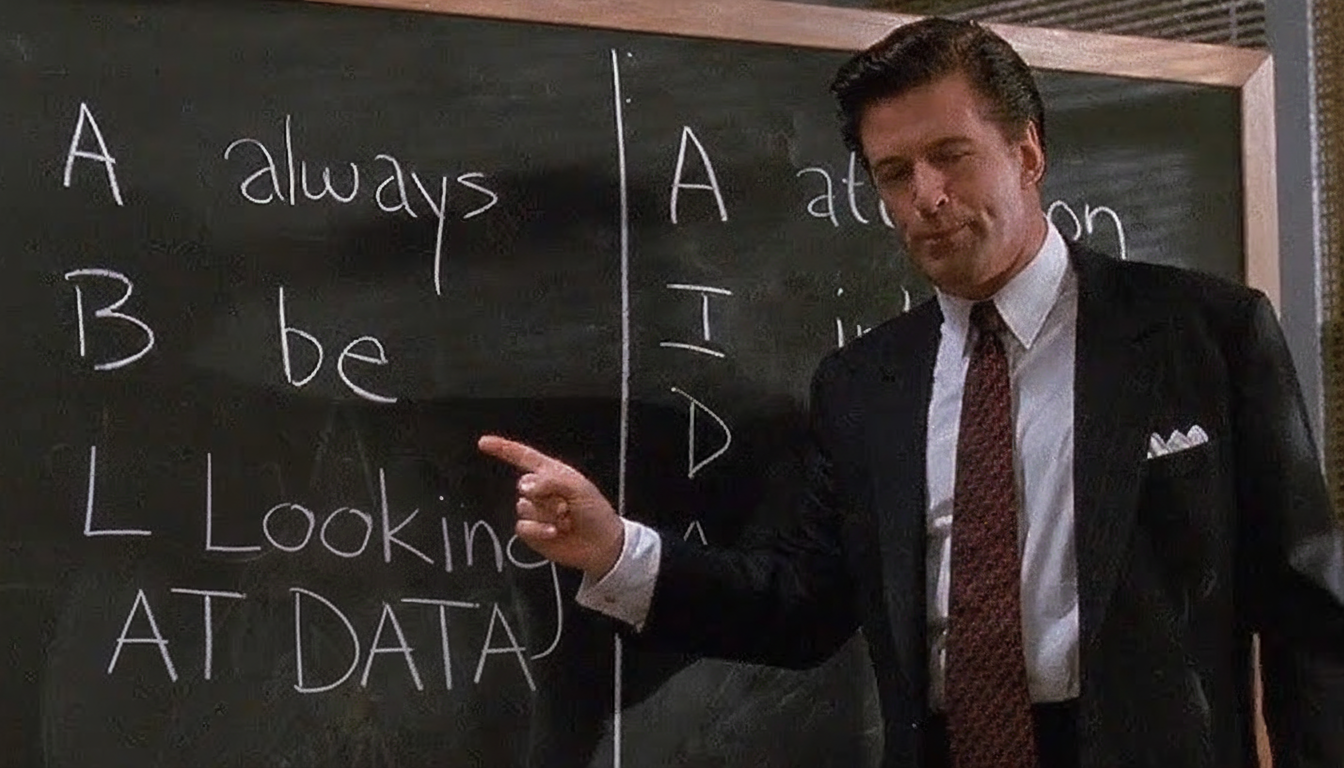

This article by Hamel Husain [1] gives a good rundown of the key considerations:

Generic quality scores such as "helpfulness" or "hallucination" are rarely useful because they do not explain what actually failed. Define narrow metrics tied to specific failure modes instead.
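As a minimal sketch of what a narrow metric could look like, the snippet below counts named failure modes across a handful of traces. The trace shape, the `[source:` marker, and the `search_orders` tool are hypothetical, chosen only for illustration; they are not from the article.

```python
from collections import Counter
from typing import Callable

# Hypothetical trace shape: {"answer": str, "tool_calls": list[str]}
Trace = dict


def missing_citation(trace: Trace) -> bool:
    """True when the answer has no source marker (assumed '[source:' convention)."""
    return "[source:" not in trace["answer"]


def skipped_order_lookup(trace: Trace) -> bool:
    """True when the agent answered without calling the hypothetical search_orders tool."""
    return "search_orders" not in trace["tool_calls"]


# One named check per failure mode, so every count points at something fixable.
CHECKS: dict[str, Callable[[Trace], bool]] = {
    "missing_citation": missing_citation,
    "skipped_order_lookup": skipped_order_lookup,
}


def failure_counts(traces: list[Trace]) -> Counter:
    """Count how many traces trip each failure-mode check."""
    counts: Counter = Counter()
    for trace in traces:
        for name, check in CHECKS.items():
            if check(trace):
                counts[name] += 1
    return counts


# Illustrative data only.
traces = [
    {"answer": "Your order shipped [source: orders-db]", "tool_calls": ["search_orders"]},
    {"answer": "Your order shipped yesterday", "tool_calls": ["search_orders"]},
    {"answer": "It should arrive soon", "tool_calls": []},
]
counts = failure_counts(traces)
print(counts)
```

Unlike a single quality score, each count here names the thing to fix, and new checks can be added as review surfaces new failure modes.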

Inspection has to be easy and frequent, so that hands-on review happens regularly and reveals patterns, not just numbers. That only works when the tooling is built to support it.

LLM judges are part of the system and must be validated. They should be treated like classifiers: checked against human labels, tuned carefully, and evaluated with metrics like precision and recall rather than trusted at face value.
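Treating a judge like a classifier can be sketched as follows, assuming each trace has a human label and a judge verdict where `True` means "this output failed". The function name, the report type, and the sample labels are illustrative assumptions, not part of the article.

```python
from dataclasses import dataclass


@dataclass
class JudgeReport:
    precision: float
    recall: float
    true_positives: int
    false_positives: int
    false_negatives: int


def validate_judge(human_labels: list[bool], judge_labels: list[bool]) -> JudgeReport:
    """Compare judge verdicts with human labels; True means 'this output failed'."""
    if len(human_labels) != len(judge_labels):
        raise ValueError("label lists must have the same length")
    pairs = list(zip(human_labels, judge_labels))
    tp = sum(1 for h, j in pairs if h and j)
    fp = sum(1 for h, j in pairs if not h and j)
    fn = sum(1 for h, j in pairs if h and not j)
    # Fall back to 0.0 when a denominator is empty instead of dividing by zero.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return JudgeReport(precision, recall, tp, fp, fn)


# Hypothetical labels for six traces (illustrative data only).
human = [True, True, False, False, True, False]
judge = [True, False, False, True, True, False]
report = validate_judge(human, judge)
print(report)
```

Low precision means the judge flags outputs that humans consider fine; low recall means it misses real failures. Which matters more depends on how the judge's verdicts are used.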

Good experiments start from real behavior, not abstract test generation. Start from real logs or traces, then create synthetic examples grounded in those actual patterns and edge cases.
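One way this could look in practice, as a rough sketch: sample real traces per observed failure tag, then build a generation request that quotes those real inputs. The `failure_tag` and `user_input` fields and both function names are hypothetical, and the model call itself is deliberately left out.

```python
import random
from collections import defaultdict


def sample_by_failure_tag(traces: list[dict], per_tag: int, seed: int = 0) -> dict[str, list[dict]]:
    """Pick up to `per_tag` real traces for each observed failure tag."""
    rng = random.Random(seed)  # fixed seed keeps the sample reproducible
    by_tag: dict[str, list[dict]] = defaultdict(list)
    for trace in traces:
        by_tag[trace["failure_tag"]].append(trace)
    return {tag: rng.sample(group, min(per_tag, len(group))) for tag, group in by_tag.items()}


def grounding_prompt(tag: str, examples: list[dict]) -> str:
    """Build a generation request that quotes real inputs rather than inventing scenarios."""
    quoted = "\n".join(f"- {ex['user_input']}" for ex in examples)
    return (
        f"Real user inputs that led to the failure '{tag}':\n{quoted}\n"
        "Write 5 new inputs that vary wording and details but keep the same difficulty."
    )


# Illustrative log records only.
logs = [
    {"failure_tag": "wrong_date_format", "user_input": "Book me for 3/4 next month"},
    {"failure_tag": "wrong_date_format", "user_input": "Reschedule to the 12th"},
    {"failure_tag": "ignored_constraint", "user_input": "Cheapest flight, no layovers"},
]
samples = sample_by_failure_tag(logs, per_tag=1)
prompts = {tag: grounding_prompt(tag, examples) for tag, examples in samples.items()}
print(prompts["ignored_constraint"])
```

The point of the design is that every synthetic example traces back to a pattern someone actually observed in the logs.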

Labeling is part of product thinking, not just annotation. Keeping domain experts close to labeling helps teams refine what they truly care about, because criteria often become clearer only after reviewing real outputs.

Too much automation can hide the signal needed for improvement. LLMs can help with boilerplate and plumbing, but they cannot replace the human work of looking directly at failures and deciding what matters.

References

[1] Husain, Hamel. “The Revenge of the Data Scientist.” Hamel’s Blog. https://hamel.dev/blog/posts/revenge/

Originally posted on LinkedIn.