Marcus vs. Brundage: A Concrete AGI Bet

Gary Marcus, a cognitive scientist and longtime critic of scaling-centric AI, and Miles Brundage, an AI policy researcher and former OpenAI policy lead, agreed in 2024 to make the following bet on AI [1]:

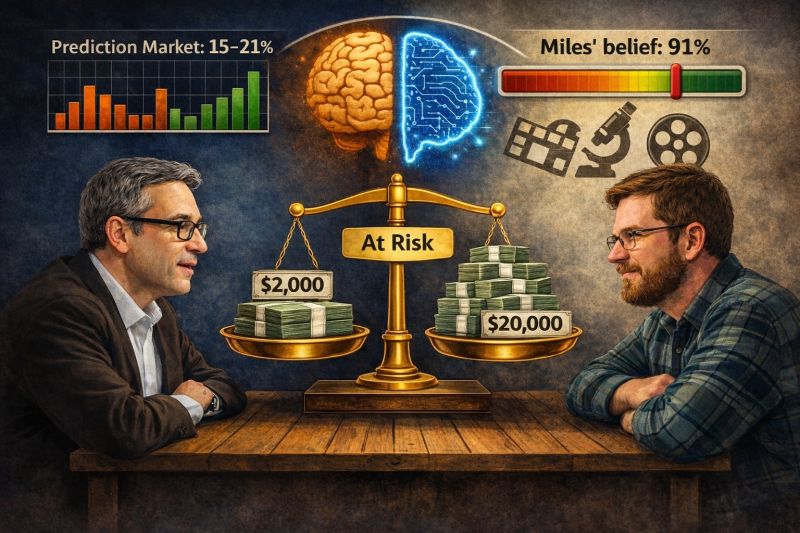

“If AI can complete at least eight of the milestones with minimal human involvement by the end of 2027, Marcus pays Brundage $2,000. If not, Brundage pays Marcus $20,000.”

The tasks are ambitious. AI systems must:

- Watch and analyze a new film.

- Read and interpret a new novel.

- Write a NYT-caliber obituary without hallucinations.

- Master most new video games.

- Draft a professional legal brief without fake citations.

- Generate 10,000+ lines of correct production code.

- Produce a Pulitzer-caliber book.

- Write an Oscar-caliber screenplay.

- Make a Nobel-caliber scientific discovery.

- Translate a published mathematical proof into formal, machine-verifiable form.

My first reaction: this doesn’t look like average human general intelligence. Almost no human could do all of these tasks! Clearing eight of ten feels less like “human-level AGI” and more like elite cross-domain expertise, something close to a superhuman polymath.

But the wager does not require a single unified AI system to accomplish all tasks. Multiple AI systems can be used across domains, as long as each task is completed with minimal human involvement. So the benchmark measures what AI systems can do collectively, not whether one monolithic AGI exists.

The asymmetric odds are striking. Brundage risks $20,000 to win $2,000. For that to be positive expected value, he would need to believe the probability of success exceeds 20,000 / 22,000, or roughly 91 percent. Prediction markets on Manifold [2] and Metaculus [3] offer a crowd forecast to compare against that threshold.
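
The 91 percent threshold comes from a simple break-even calculation. Here is a minimal sketch, assuming a risk-neutral bettor who cares only about the dollar payoff (an assumption made for illustration, not something either party has stated). The names `expected_value` and `break_even` are hypothetical.

```python
from fractions import Fraction

# Stakes as described in the post (USD).
BRUNDAGE_WINS = 2_000    # Marcus pays this if at least eight milestones are met
BRUNDAGE_LOSES = 20_000  # Brundage pays this otherwise


def expected_value(p_success: float) -> float:
    """Brundage's expected payoff in USD, given his probability of winning.

    Assumes risk neutrality: only the average dollar outcome matters.
    """
    return p_success * BRUNDAGE_WINS - (1 - p_success) * BRUNDAGE_LOSES


# Break-even: p * 2000 = (1 - p) * 20000, so p = 20000 / 22000 = 10/11.
break_even = Fraction(BRUNDAGE_LOSES, BRUNDAGE_WINS + BRUNDAGE_LOSES)

if __name__ == "__main__":
    print(f"break-even probability: {break_even} (about {float(break_even):.1%})")
    for p in (0.85, 0.95):
        side = "favorable" if expected_value(p) > 0 else "unfavorable"
        print(f"p = {p:.2f}: {side} for Brundage")
```

Any belief below 10/11 makes the bet a losing proposition in expectation for Brundage under this assumption, which is why the stake ratio can be read as a rough statement of confidence.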

The gap between Brundage’s implied conviction and the crowd forecast is especially interesting. But ultimately, the bet surfaces a deeper question: what do we actually mean by AGI? Average human breadth, elite cross-domain capability, or highly autonomous systems operating across fields?

Whatever happens by 2027, I appreciate that this wager forces the conversation into concrete, falsifiable milestones instead of vague timeline claims.

References

[1] Marcus, Gary. “Where will AI be at the end of 2027? A bet.” Substack, 2024. https://garymarcus.substack.com/p/where-will-ai-be-at-the-end-of-2027

[2] Manifold Markets. “Will Miles Brundage win his bet with Gary Marcus?” https://manifold.markets/dreev/will-miles-brundage-win-his-bet-wit

[3] Metaculus. “2027 AI bet: Winner between Gary Marcus and Miles Brundage?” https://www.metaculus.com/questions/31246/2027-ai-bet-winner-between-gary-marcus-and-miles-brundage/

Originally posted on LinkedIn.