LLMs Can Get Brain Rot Too

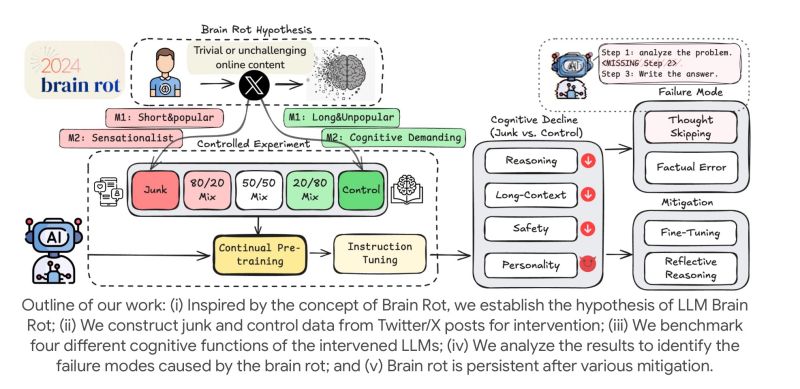

Training on viral, low-quality data costs models reasoning ability and long-context understanding, and retraining on clean data doesn't fully undo the damage.

LLMs can suffer from brain rot too (if not more so)! A recent paper [1] shows:

- Models trained on viral, low-quality data lose reasoning ability and long-context understanding.

- They show more toxic and impulsive behavior and skip reasoning steps ("thought-skipping").

- Even retraining on clean data doesn’t fully undo the damage!

Here is an idea: compute a "brain rot score" for popular social media sites by training an LLM on each site's content and evaluating the result on a core set of reasoning benchmarks!
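One way such a score could be defined is sketched below. This is a hypothetical illustration, not a method from the paper. It assumes you already have benchmark accuracies for a control model trained on clean data (ideally a comparable amount) and for the same base model trained on one site's content. The score is the mean relative accuracy drop, in percent, across the benchmarks both runs share. The function names and benchmark keys are made up for illustration.

```python
from statistics import mean


def brain_rot_score(baseline: dict[str, float], exposed: dict[str, float]) -> float:
    """Hypothetical score: mean relative accuracy drop (%) across shared benchmarks.

    baseline: benchmark -> accuracy for a control model trained on clean data.
    exposed:  benchmark -> accuracy for the same model trained on one site's content.
    Positive values mean the site's data hurt performance; negative means it helped.
    """
    shared = sorted(baseline.keys() & exposed.keys())
    if not shared:
        raise ValueError("no benchmarks in common")
    drops = []
    for name in shared:
        if baseline[name] <= 0:
            raise ValueError(f"baseline accuracy for {name!r} must be positive")
        drops.append(100.0 * (baseline[name] - exposed[name]) / baseline[name])
    return mean(drops)


def rank_sites(baseline: dict[str, float],
               per_site: dict[str, dict[str, float]]) -> list[str]:
    """Order sites from most to least 'brain rot' under the score above."""
    return sorted(per_site,
                  key=lambda site: brain_rot_score(baseline, per_site[site]),
                  reverse=True)


if __name__ == "__main__":
    # Made-up numbers, purely to show the arithmetic.
    baseline = {"bench_a": 0.80, "bench_b": 0.60}
    site_x = {"bench_a": 0.72, "bench_b": 0.57}
    print(round(brain_rot_score(baseline, site_x), 2))  # → 7.5
```

Using a relative drop rather than an absolute one keeps easy and hard benchmarks on a comparable scale; a more careful version would also average over several training seeds before comparing sites.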

References

[1] Xing, Shuo, Junyuan Hong, Yifan Wang, Runjin Chen, Zhenyu Zhang, Ananth Grama, Zhengzhong Tu, and Zhangyang Wang. “LLMs Can Get ‘Brain Rot’!” arXiv, 2025. https://arxiv.org/abs/2510.13928

Originally posted on LinkedIn.