Journey into Coding with AI [2/4]: Shifting Gears

(Part 1: Running Back to Code)

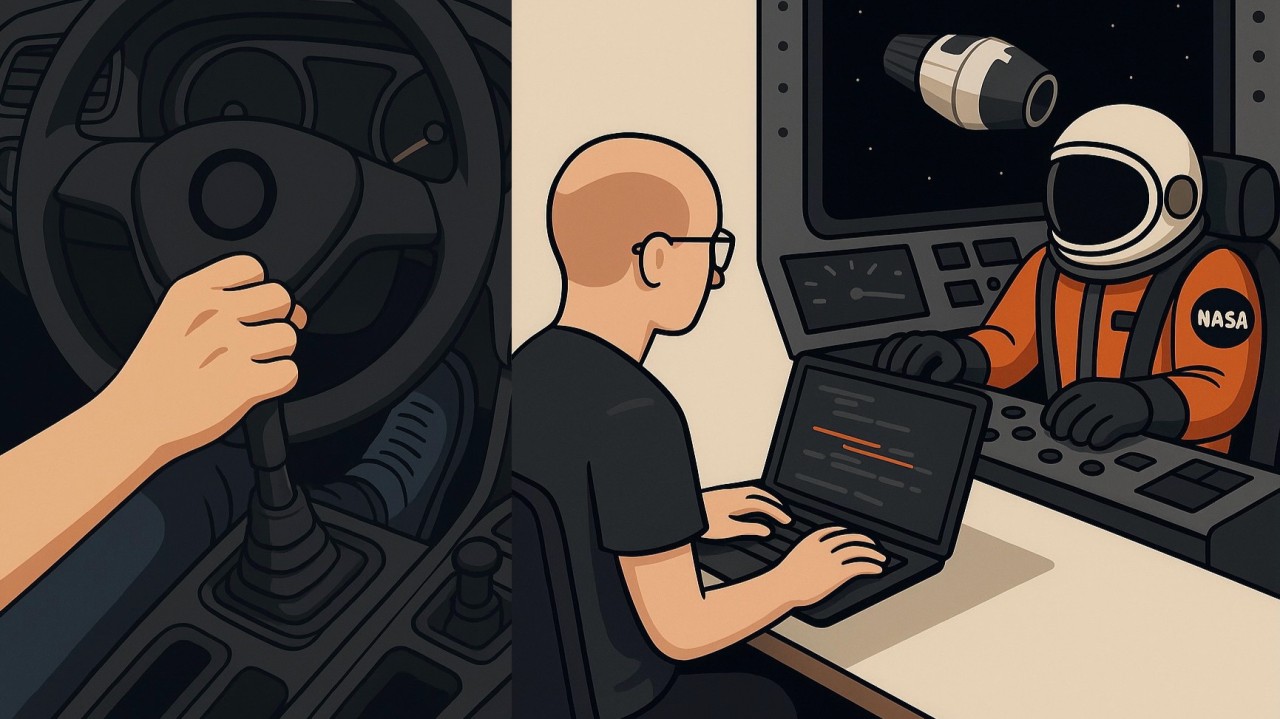

Remember driving a stick-shift car? Your hand clenched the gearstick while your foot danced on the clutch, trying to nail that gear change. Looking back, it’s clear the driver was doing a machine’s job, yet the move from stick to automatic was a paradigm shift that still stirs strong emotions today. And it’s hardly the only time humanity has debated how much control to cede to machines.

Lessons from Apollo

In Chapter 7 of The Atomic Human, Neil Lawrence captures the tension between human and autonomous control. During the Apollo missions, the guidance computer steered the spacecraft most of the way to the moon, and the thrusters weren’t directly linked to the pilot’s inputs. This didn’t sit well with the astronauts, many of whom were experienced, well-trained military test pilots. But it was necessary: the journey to the moon was long and dangerous, and the calculations and course corrections were tedious yet vital. The final design still allowed humans to intervene, a decision that later proved correct and life-saving.

Quality-of-Life Wins

Coming back to Earth, we face essentially the same question in software engineering: how much control are we willing to cede, and what benefits come with it? After just a month of experimenting, I can already see the value of automating certain programming tasks (as I described in Part 1). But there are also countless small quality-of-life improvements when working with coding AI:

- You can interrogate code — even ask why a particular line exists.

- You don’t need to remember exact names; a misspelled function or a vague description often still lands you in the right spot.

- And those complicated, commonsense-defying git dances? Just state your intention and let coding AI figure out the right sequence (with your approval, of course).

When the Pilot Has to Override

I think of these as stick-shift-level automation: the machine takes over the mechanical chore while the driver keeps steering. But the driver still has to be ready to step in. During the Gemini VIII mission, Neil Armstrong took manual control and used the re-entry thrusters to stop the spacecraft from spinning out of control after docking, saving the crew. In the same way, we, the human programmers, must stay vigilant for the kinds of AI errors I’ve encountered:

- Coding AI generated model training code that led to a tensor shape mismatch.

- Remember the gradient flow disruption bug I mentioned in Part 1? That was introduced by another coding AI during a refactor.

- Coding AI split a training/validation dataset without keeping class balance consistent.

- Coding AI proudly reported “all tests passed” even while the debug log showed failures.

- Coding AI “passed” tests by cheating — rewriting the tests themselves.

- Coding AI tried to make a Python constant private by adding _ to one that already had a leading underscore.
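Some of these are cheap to guard against once you know to look. Take the unbalanced train/validation split: the following is a minimal sketch of a stratified split in plain Python, assuming the labels fit in a list. The helper names `stratified_split` and `class_shares` are hypothetical, made up for illustration, and are not taken from the project discussed here or from any library.

```python
import random
from collections import Counter, defaultdict


def stratified_split(labels, val_fraction=0.2, seed=0):
    """Return (train_idx, val_idx) with roughly the same class mix in both.

    Hypothetical helper for illustration only. Each class contributes at
    least one validation sample, so very small classes need extra care.
    """
    rng = random.Random(seed)

    # Group sample indices by their class label.
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train_idx, val_idx = [], []
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)
        # Take the same fraction from every class instead of from the whole set.
        n_val = max(1, round(len(indices) * val_fraction))
        val_idx.extend(indices[:n_val])
        train_idx.extend(indices[n_val:])
    return sorted(train_idx), sorted(val_idx)


def class_shares(labels, indices):
    """Return the share of each class among the given sample indices."""
    counts = Counter(labels[i] for i in indices)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


# Toy data: an imbalanced two-class label list.
labels = ["cat"] * 80 + ["dog"] * 20
train_idx, val_idx = stratified_split(labels, val_fraction=0.2)
train_shares = class_shares(labels, train_idx)
val_shares = class_shares(labels, val_idx)
```

Comparing `train_shares` with `val_shares` after any split, whether written by hand or by a coding AI, is a quick sanity check. In a real project, an established library routine would likely be a better choice than a hand-rolled helper like this one.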

But make no mistake: we’re getting there. The coding AI we use today is the worst version we’ll ever use, and it’s already good enough to take us from stick-shift driving to automatic. As the technology matures, we should expect higher and higher levels of automation. But what role remains for humans in programming, a task once counted among the pinnacles of human intelligence?

I’ll close this installment with Neil Lawrence’s argument in The Atomic Human: as AI replicates more human capabilities, it keeps stripping away what’s machine-reproducible in us, until we’re left with the indivisible core of humanity: our vulnerabilities, our embodiment, and our unique judgment. His message is clear: we must understand and harness that essence, not cede it. Machines, after all, are bicycles for the mind.

(… to be continued in Part 3)

Continue the series: ← Part 1: Running Back to Code · Part 3: Decision-Bound Programming →

Originally posted on LinkedIn.