Eliciting In-context Retrieval and Reasoning for Long-context LLMs (Preprint)

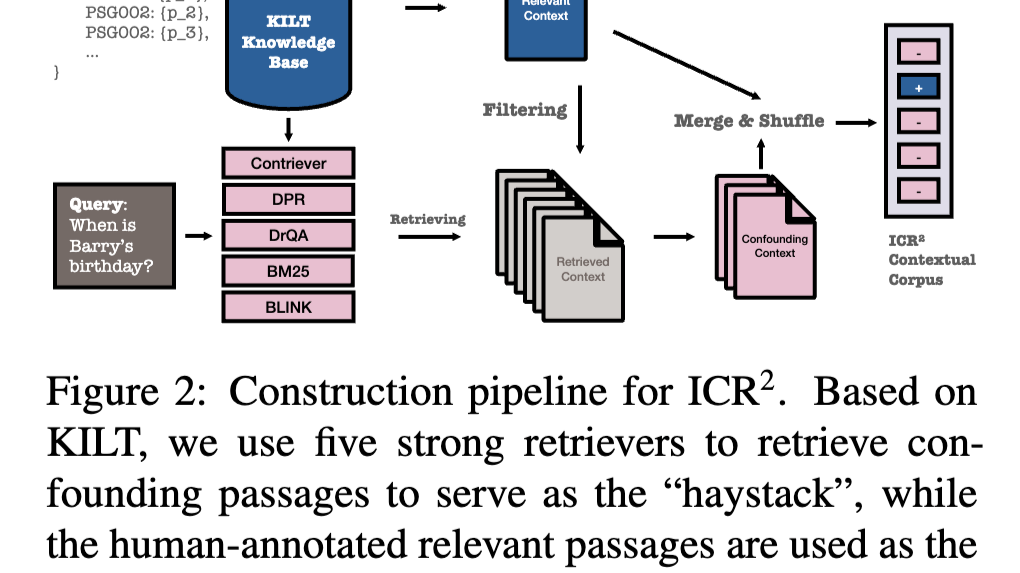

What if LLMs had context windows so large that an entire knowledge base could fit into a single prompt? This would revolutionize Retrieval-Augmented Generation (RAG) applications by enabling retrieval, re-ranking, reasoning, and generation all in one step. With a Long-Context Language Model (LCLM), we could simplify the RAG architecture by relying on the model's ability to perform In-Context Retrieval and Reasoning (ICR²). But are current LCLMs up to the task? If not, how can we improve their performance?
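To make the single-prompt idea concrete, here is a minimal Python sketch of how a knowledge base might be packed into one long-context prompt that asks the model to retrieve, reason, and answer in a single generation. This is an illustration only, not the method from the paper [1]. The `Passage` type, `build_icr2_prompt`, the prompt wording, and the whitespace-based token count are all hypothetical assumptions. The model call itself is omitted.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    pid: str   # hypothetical passage identifier, cited back by the model
    text: str


def approx_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())


def build_icr2_prompt(question: str, passages: list[Passage], budget: int) -> str:
    """Pack passages into a single prompt until the (assumed) token budget
    is reached, then ask the model to retrieve, reason, and answer in one pass."""
    header = (
        "You are given a numbered knowledge base. First list the IDs of the "
        "passages relevant to the question, then reason over them, then answer.\n\n"
    )
    footer = f"\nQuestion: {question}\nRelevant IDs:"
    used = approx_tokens(header) + approx_tokens(footer)
    blocks = []
    for p in passages:
        block = f"[{p.pid}] {p.text}\n"
        cost = approx_tokens(block)
        if used + cost > budget:
            # The knowledge base no longer fits; a real system would need a
            # fallback such as a conventional retriever.
            break
        blocks.append(block)
        used += cost
    return header + "".join(blocks) + footer


# Usage example with a toy two-passage knowledge base.
kb = [
    Passage("p1", "The Eiffel Tower is in Paris."),
    Passage("p2", "Mount Fuji is the highest mountain in Japan."),
]
print(build_icr2_prompt("Where is the Eiffel Tower?", kb, budget=1000))
```

The budget check shows the core trade-off: a pipeline that retrieves before generating is no longer needed only when the whole knowledge base fits within the context window.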

Read more about it in our fresh preprint [1]!

Update (June 2025): the paper has been accepted to ACL 2025 Findings.

References

[1] Qiu, Yifu, Varun Embar, Yizhe Zhang, Navdeep Jaitly, Shay Cohen, and Benjamin Han. “Eliciting In-context Retrieval and Reasoning for Long-context Large Language Models.” 2025. https://arxiv.org/abs/2501.08248

[2] Lee, Jinhyuk, Anthony C., Zhuyun Dai, Dheeru Dua, Devendra Singh Sachan, Michael Boratko, et al. “Can Long-Context Language Models Subsume Retrieval, RAG, SQL, and More?” 2024. https://arxiv.org/abs/2406.13121

Originally posted on LinkedIn.