TWEAK at NAACL 2024: Decoding Without Hallucinations

See how we TWEAK’ed the decoding process to reduce hallucination when verbalizing knowledge graphs, at NAACL 2024 in Mexico City!

For the runners among the conference participants, two running tours are already scheduled (10K and 5K). Check Whova!

Poster (PDF): tweak-poster.pdf

This is the conference-week follow-up to the original acceptance announcement (April 2024), which also covered our LAGRANGE paper at LREC-COLING.

References

[1] Qiu, Yifu, Varun Embar, Shay Cohen, and Benjamin Han. “Think While You Write: Hypothesis Verification Promotes Faithful Knowledge-to-Text Generation.” 2023. Apple Machine Learning Research: https://machinelearning.apple.com/research/write-hypothesis

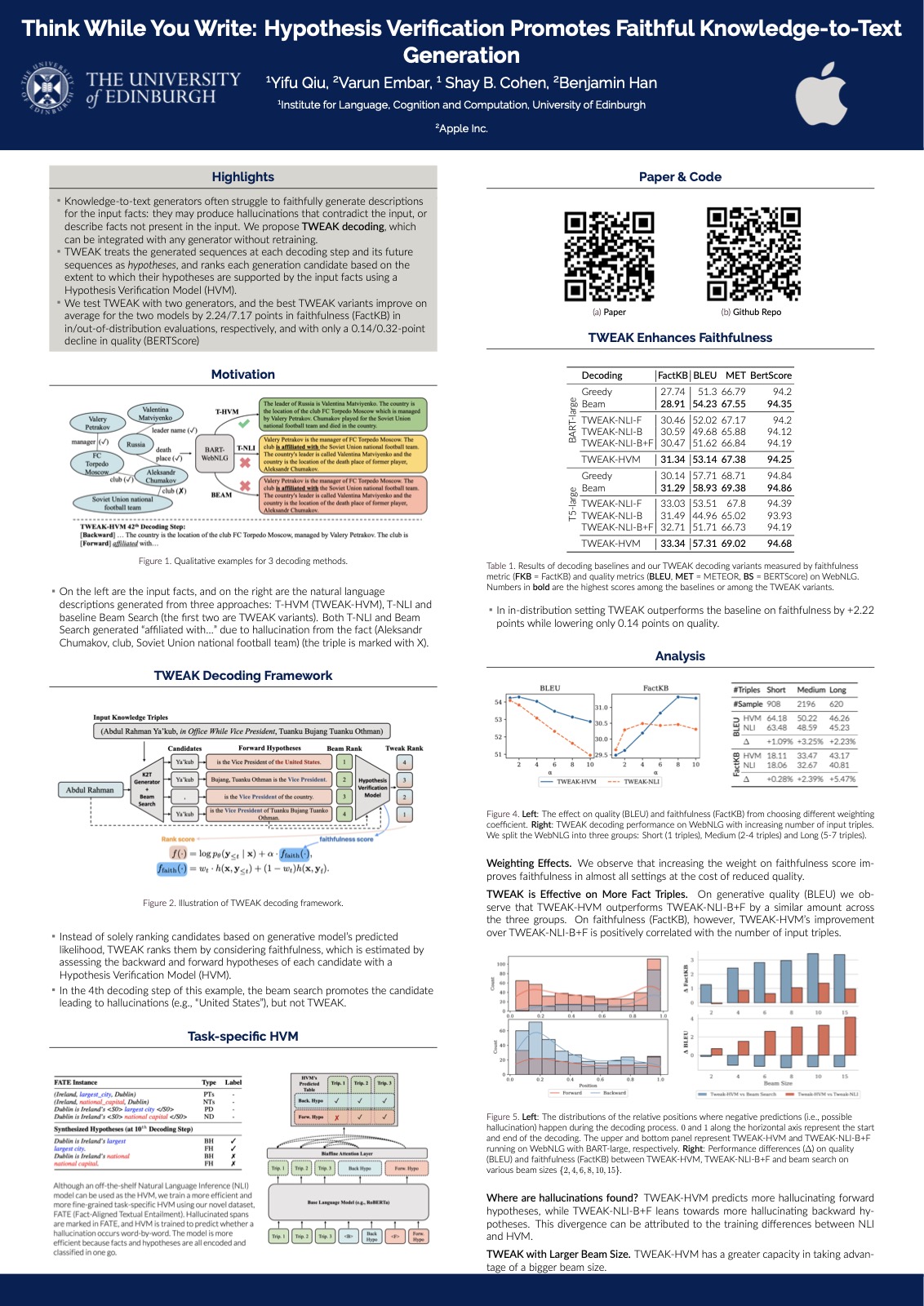

Abstract: Neural knowledge-to-text generation models often struggle to faithfully generate descriptions for the input facts: they may produce hallucinations that contradict the given facts, or describe facts not present in the input. To reduce hallucinations, we propose a novel decoding method, TWEAK (Think While Effectively Articulating Knowledge). TWEAK treats the generated sequences at each decoding step and its future sequences as hypotheses, and ranks each generation candidate based on how well their corresponding hypotheses support the input facts using a Hypothesis Verification Model (HVM). We first demonstrate the effectiveness of TWEAK by using a Natural Language Inference (NLI) model as the HVM and report improved faithfulness with minimal impact on the quality. We then replace the NLI model with our task-specific HVM trained with a first-of-a-kind dataset, FATE (Fact-Aligned Textual Entailment), which pairs input facts with their faithful and hallucinated descriptions with the hallucinated spans marked. The new HVM improves the faithfulness and the quality further and runs faster. Overall the best TWEAK variants improve on average 2.22/7.17 points on faithfulness measured by FactKB over WebNLG and TekGen/GenWiki, respectively, with only 0.14/0.32 points degradation on quality measured by BERTScore over the same datasets. Since TWEAK is a decoding-only approach, it can be integrated with any neural generative model without retraining.
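To make the reranking idea in the abstract concrete, here is a minimal Python sketch, not the paper's implementation. It assumes each candidate carries the text generated so far (a "backward" hypothesis) and a look-ahead continuation (a "forward" hypothesis), and that a verifier returns a score in [0, 1] for how well a hypothesis is backed by the input facts. The names `Candidate`, `toy_hvm`, `rerank`, `alpha`, and `backward_weight` are hypothetical, the additive scoring formula is an illustrative assumption, and the token-overlap verifier is only a stand-in for a real NLI model or trained HVM.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Candidate:
    backward: str    # text generated so far, including the candidate token
    forward: str     # a look-ahead continuation (the "future sequence")
    log_prob: float  # the generator's own score for this candidate


def toy_hvm(facts: str, hypothesis: str) -> float:
    """Stand-in verifier (assumption): share of hypothesis tokens found in the facts.

    A real setup would call an NLI model or a task-specific HVM here.
    """
    fact_tokens = set(facts.lower().split())
    hyp_tokens = hypothesis.lower().split()
    if not hyp_tokens:
        return 0.0
    return sum(tok in fact_tokens for tok in hyp_tokens) / len(hyp_tokens)


def rerank(
    facts: str,
    candidates: List[Candidate],
    hvm: Callable[[str, str], float] = toy_hvm,
    alpha: float = 1.0,            # weight of faithfulness vs. generator score
    backward_weight: float = 0.5,  # mix between backward and forward hypotheses
) -> List[Candidate]:
    """Sort candidates by generator score plus a weighted faithfulness score."""

    def score(c: Candidate) -> float:
        faithfulness = (
            backward_weight * hvm(facts, c.backward)
            + (1.0 - backward_weight) * hvm(facts, c.forward)
        )
        return c.log_prob + alpha * faithfulness

    return sorted(candidates, key=score, reverse=True)


if __name__ == "__main__":
    facts = "alan turing birthplace london"
    candidates = [
        Candidate("Turing was born in Paris", "Turing was born in Paris France", -1.0),
        Candidate("Turing was born in London", "Turing was born in London", -1.2),
    ]
    for c in rerank(facts, candidates, alpha=2.0):
        print(c.backward)
```

Because the verifier only reorders candidates at decoding time, a sketch like this leaves the generator untouched, which mirrors the abstract's point that the approach needs no retraining. Setting `alpha` to 0 falls back to ranking by the generator's score alone.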

Originally posted on LinkedIn.