Two K2T Papers Accepted: TWEAK at NAACL, LAGRANGE at LREC-COLING (2024)

Check out our two most recently published papers on the knowledge-to-text (K2T) generation task!

In the first paper, to be published in NAACL 2024 Findings [1], we “tweaked” the decoding process to make a generator model “think while writing” and thereby reduce hallucination (see screenshot 1 for the abstract):

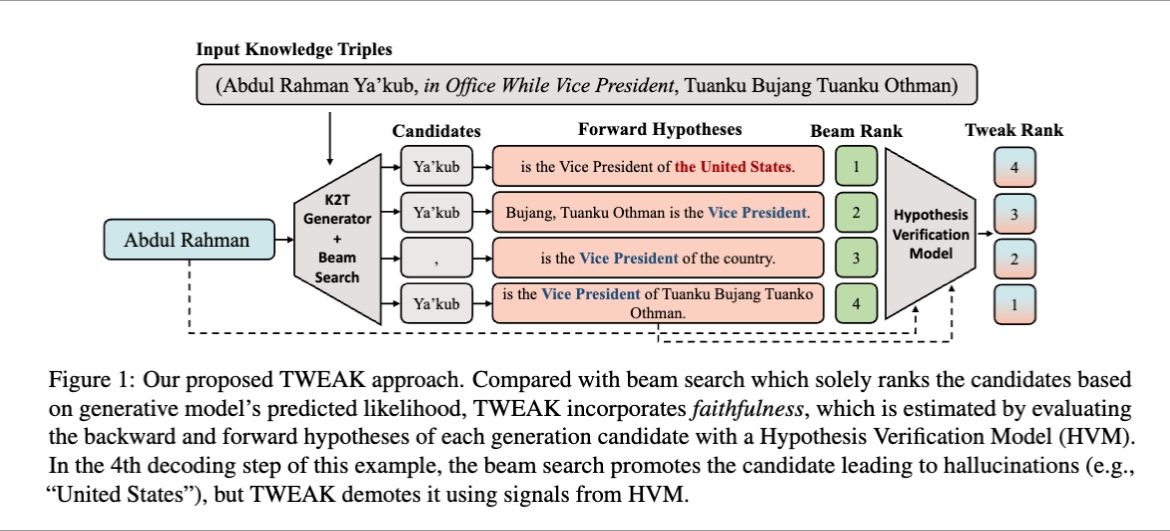

- The core idea is to re-rank generation candidates by performing hypothesis verification during decoding (screenshot 2). The approach is therefore easy to integrate with an existing generator model, with no re-training required.

- The hypotheses come from both backward generation (the text decoded so far) and forward generation (a possible continuation); a good hypothesis should be supported by the input facts.

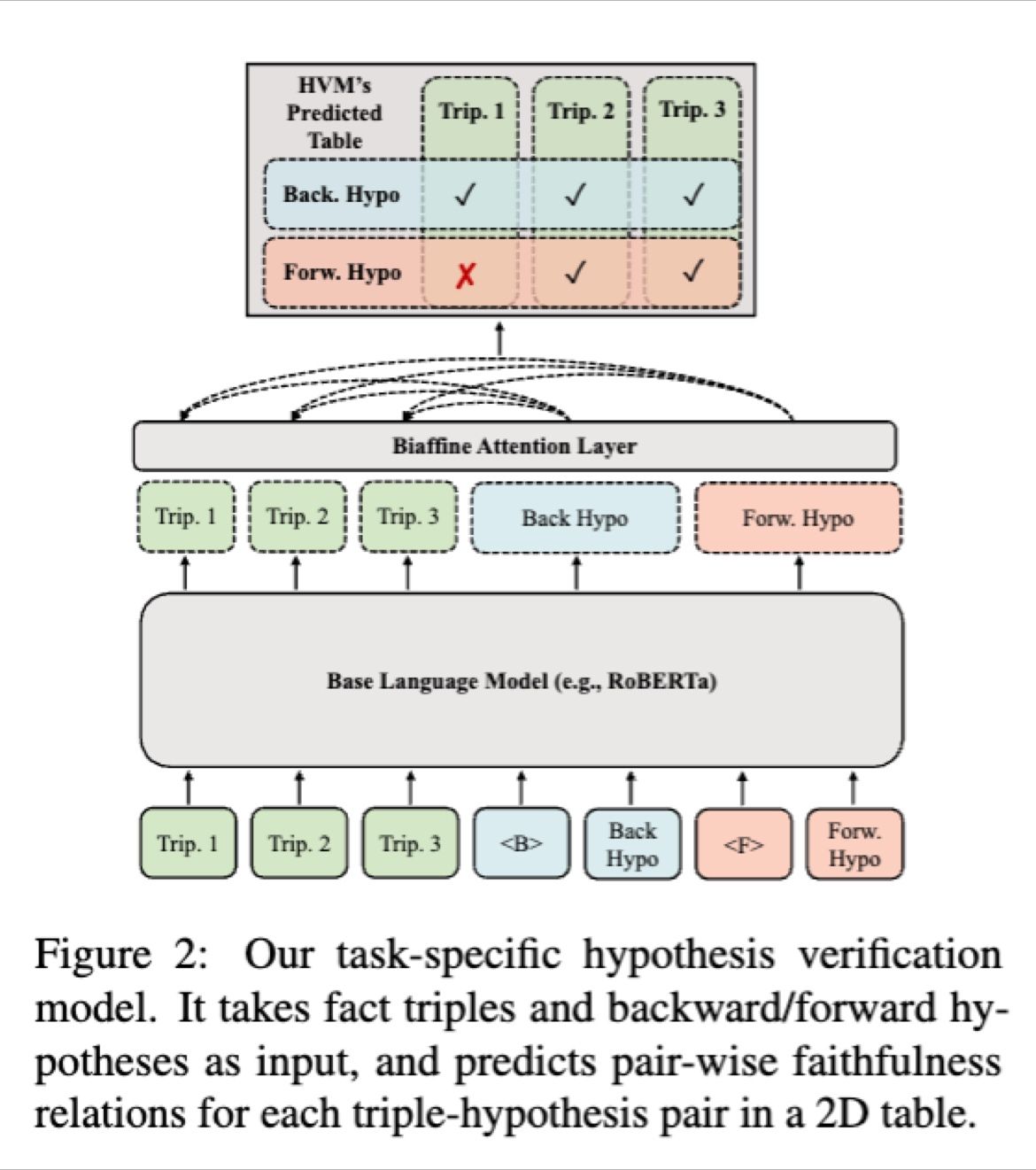

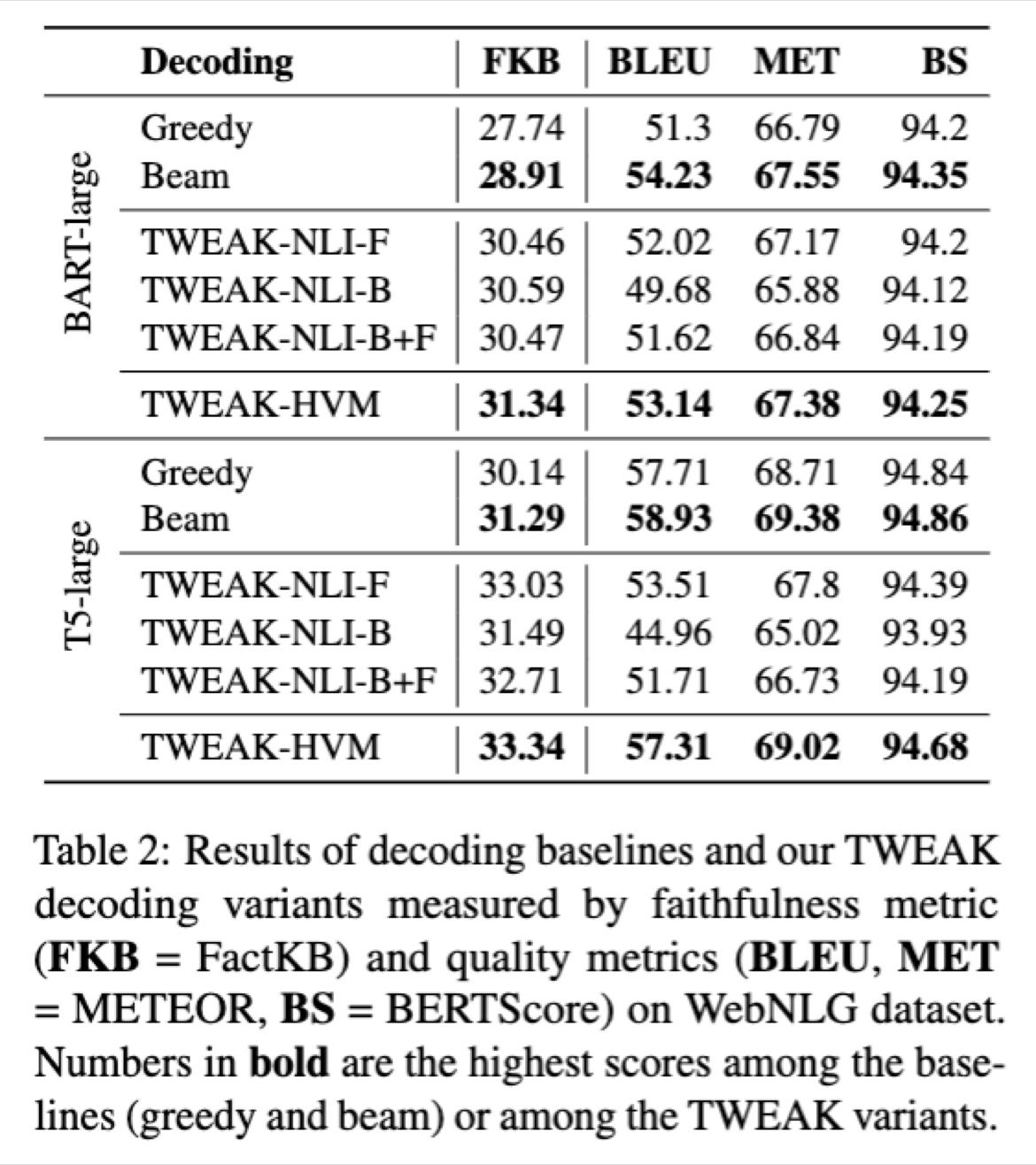

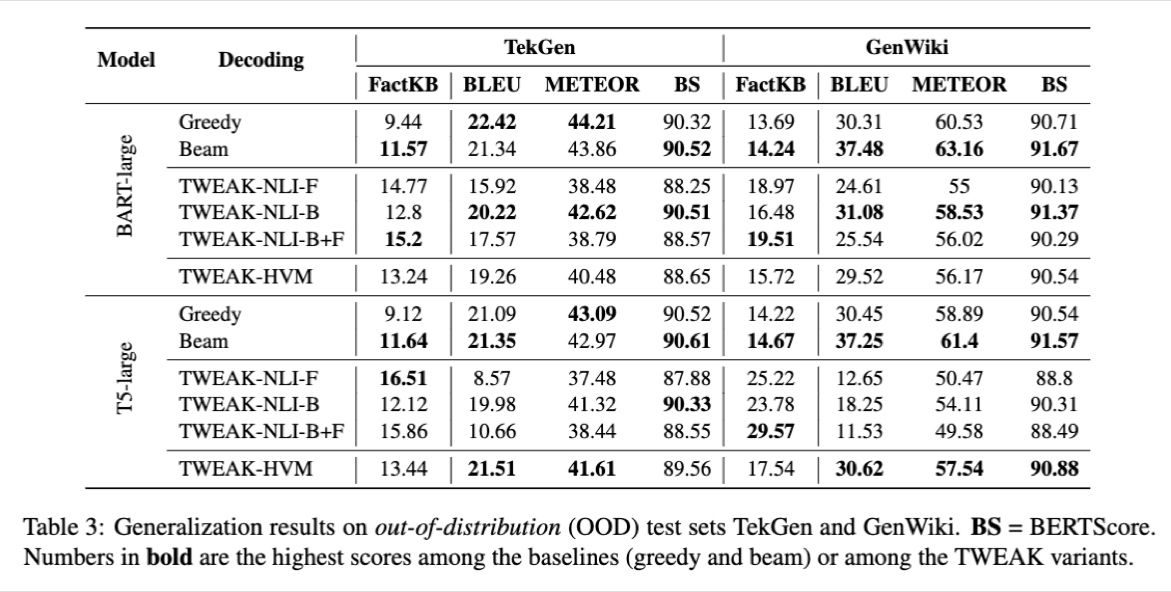

- Using an NLI model for hypothesis verification proves effective. We go further and train a task-specific hypothesis verification model (HVM, screenshot 3) on our novel dataset FATE (Fact-Aligned Textual Entailment), which results in higher faithfulness, higher similarity to the references, and lower inference cost (screenshots 4 and 5).
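To make the re-ranking idea in the bullets above concrete, here is a minimal sketch, not the paper's implementation. The `Candidate` class, the `rerank` function, the `alpha` weight, the simple averaging of backward and forward scores, and the token-overlap `toy_verify` are all assumptions for illustration; in practice the verifier would be an NLI model or a trained HVM, and the paper's actual scoring may differ.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple

Triple = Tuple[str, str, str]  # (subject, predicate, object) input fact


@dataclass
class Candidate:
    backward: str     # hypothetical: text decoded so far
    forward: str      # hypothetical: a look-ahead continuation
    gen_score: float  # generator log-probability for this candidate


def toy_verify(facts: Sequence[Triple], hypothesis: str) -> float:
    """Stand-in verifier (assumption): fraction of hypothesis tokens found in the facts."""
    fact_tokens = {tok.lower() for triple in facts for part in triple for tok in part.split()}
    hyp_tokens = [tok.lower().strip(".,") for tok in hypothesis.split()]
    if not hyp_tokens:
        return 0.0
    return sum(tok in fact_tokens for tok in hyp_tokens) / len(hyp_tokens)


def rerank(
    candidates: List[Candidate],
    facts: Sequence[Triple],
    verify: Callable[[Sequence[Triple], str], float],
    alpha: float = 0.5,
) -> List[Candidate]:
    """Sort candidates by a mix of generator score and faithfulness score."""

    def combined(c: Candidate) -> float:
        backward_score = verify(facts, c.backward)
        forward_score = verify(facts, f"{c.backward} {c.forward}")
        faithfulness = 0.5 * (backward_score + forward_score)  # assumed equal weighting
        return (1 - alpha) * c.gen_score + alpha * faithfulness

    return sorted(candidates, key=combined, reverse=True)


facts = [("Alan Turing", "birthplace", "London")]
candidates = [
    Candidate("Alan Turing was born in", "Paris.", gen_score=-1.00),
    Candidate("Alan Turing was born in", "London.", gen_score=-1.05),
]
ranked = rerank(candidates, facts, toy_verify)
print(ranked[0].backward, ranked[0].forward)
```

In this toy example the generator alone slightly prefers the unsupported "Paris." continuation, while the verification term moves the candidate supported by the input fact to the top. The generator itself is never modified, which is why this kind of approach needs no re-training.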

The second paper [2] (screenshot 6), to be published in LREC-COLING 2024 and described in more detail in a previous post [3], shows how cyclic evaluation can be an effective strategy for evaluating K2T dataset quality, in place of unidirectional evaluation strategies that require references. The paper also constructs a dataset, LAGRANGE, which yields better-quality models than other distantly supervised datasets.
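As a rough illustration of what a cyclic evaluation can look like, the sketch below runs a graph-to-text-to-graph cycle and measures how much of the original graph survives. It assumes the two models were trained on the dataset under evaluation; `graph_to_text`, `text_to_graph`, the toy stand-ins, and the triple-level F1 metric are all hypothetical choices here, and the paper's exact setup may differ.

```python
from typing import Callable, List, Sequence, Set, Tuple

Triple = Tuple[str, str, str]  # (subject, predicate, object)


def triple_f1(original: Set[Triple], reconstructed: Set[Triple]) -> float:
    """Exact-match F1 between the original and the reconstructed triple sets."""
    if not original and not reconstructed:
        return 1.0
    overlap = len(original & reconstructed)
    if overlap == 0:
        return 0.0
    precision = overlap / len(reconstructed)
    recall = overlap / len(original)
    return 2 * precision * recall / (precision + recall)


def cyclic_score(
    graphs: Sequence[List[Triple]],
    graph_to_text: Callable[[List[Triple]], str],
    text_to_graph: Callable[[str], List[Triple]],
) -> float:
    """Average F1 over graph -> text -> graph cycles; no reference text is needed."""
    scores = [
        triple_f1(set(graph), set(text_to_graph(graph_to_text(graph))))
        for graph in graphs
    ]
    return sum(scores) / len(scores) if scores else 0.0


# Toy stand-ins for trained models (assumptions for illustration only).
def toy_g2t(graph: List[Triple]) -> str:
    return " ; ".join(" | ".join(triple) for triple in graph)


def toy_t2g(text: str) -> List[Triple]:
    return [tuple(part.split(" | ")) for part in text.split(" ; ") if part]


def lossy_g2t(graph: List[Triple]) -> str:
    return toy_g2t(graph[:1])  # drops every fact except the first


graphs = [[
    ("Alan Turing", "birthplace", "London"),
    ("Alan Turing", "field", "computer science"),
]]
perfect = cyclic_score(graphs, toy_g2t, toy_t2g)
lossy = cyclic_score(graphs, lossy_g2t, toy_t2g)
print(perfect, lossy)
```

The point of the design is that the cycle compares the input with its own reconstruction, so a generator that drops or invents facts is penalized without any human-written reference text.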

Update (June 2024): the TWEAK paper poster is up in the post “TWEAK at NAACL 2024: Decoding Without Hallucinations.”

References

[1] Qiu, Yifu, Varun Embar, Shay Cohen, and Benjamin Han. “Think While You Write: Hypothesis Verification Promotes Faithful Knowledge-to-Text Generation.” 2023. https://arxiv.org/abs/2311.09467

[2] Mousavi, Ali, Xin Zhan, He Bai, Peng Shi, Theodoros Rekatsinas, Benjamin Han, Yunyao Li, Jeff Pound, Joshua Susskind, Natalie Schluter, Ihab Ilyas, and Navdeep Jaitly. “Construction of Paired Knowledge Graph-Text Datasets Informed by Cyclic Evaluation.” 2023. https://machinelearning.apple.com/research/construction-paired-knowledge-graph

[3] Han, Benjamin. Previous post on the LAGRANGE paper. LinkedIn. https://www.linkedin.com/posts/benjaminhan_chatgpt-guanaco-knowledgegraphs-activity-7111945686794833920-L_qZ

Originally posted on LinkedIn.