Generative AI Seeped into Research Peer Reviews

A while ago, Wired reported that ChatGPT and similar generative AI tools are being used to mass-produce scammy books that flood online book markets [1]. The story seems straightforward: there’s a profit motive and there’s a tool enabling it. Voilà.

But that could never happen to ethics-conscious, integrity-driven researchers, could it? It turns out it absolutely can, although, as of this writing, probably at a smaller scale [2].

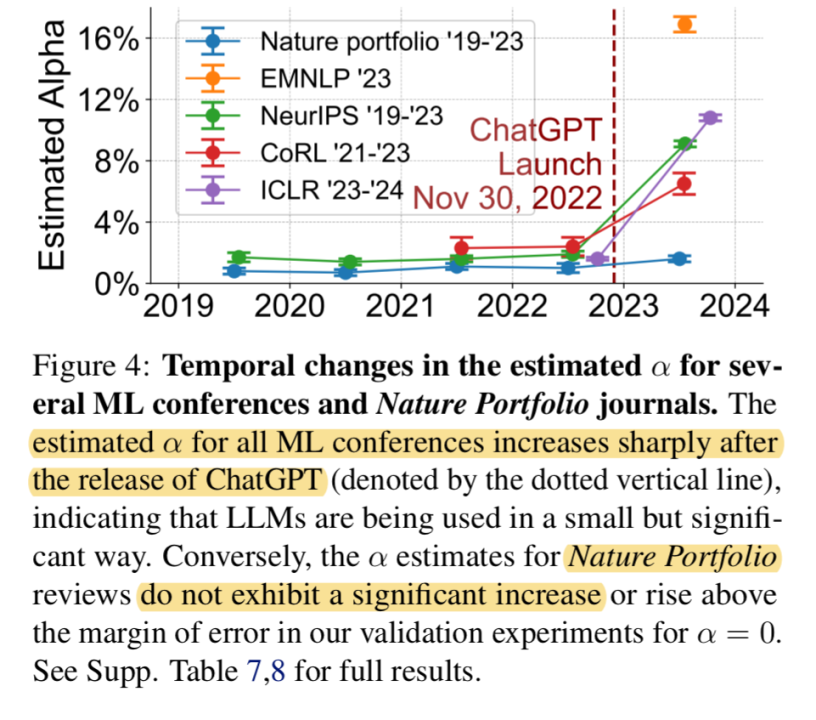

But even if authors of research papers do it, surely the group that should hold them accountable, namely the reviewers, would not take the same shortcut? Well, a recent paper [3] estimates that 10.6% of the review text for ICLR 2024 and 16.9% for EMNLP 2023 could have been substantially modified by AI.

Originally posted on LinkedIn.

References

[1] Kate Knibbs. “Scammy AI-Generated Book Rewrites Are Flooding Amazon.” Wired, January 10, 2024. https://www.wired.com/story/scammy-ai-generated-books-flooding-amazon/

[2] Benjamin Han. “Research Papers Claiming ‘I Am an AI Language Model.’” LinkedIn, 2024. https://www.linkedin.com/posts/benjaminhan_elen-le-foll-elenlefoll-activity-7174624268310261760-eW0M

[3] Weixin Liang, Zachary Izzo, Yaohui Zhang, Haley Lepp, Hancheng Cao, Xuandong Zhao, Lingjiao Chen, Haotian Ye, Sheng Liu, Zhi Huang, Daniel McFarland, and James Zou. “Monitoring AI-Modified Content at Scale: A Case Study on the Impact of ChatGPT on AI Conference Peer Reviews.” 2024. https://arxiv.org/abs/2403.07183

[4] Jordan Boyd-Graber, Naoaki Okazaki, Anna Rogers. “ACL 2023 Policy on AI Writing Assistance.” 2023. https://2023.aclweb.org/blog/ACL-2023-policy/

[5] Anna Rogers, Jordan Boyd-Graber, Naoaki Okazaki. “ACL’23 Peer Review Policies.” 2023. https://2023.aclweb.org/blog/ACL-2023-policy/