LLMs and AGI: Are We Measuring Reasoning or Memorization?

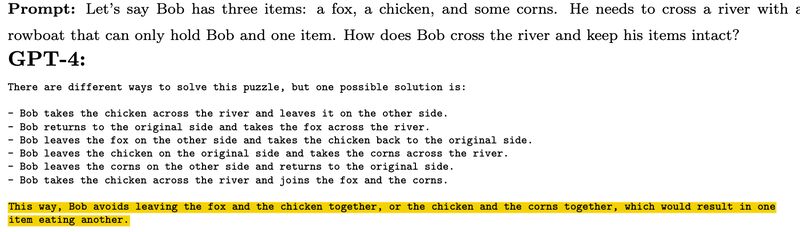

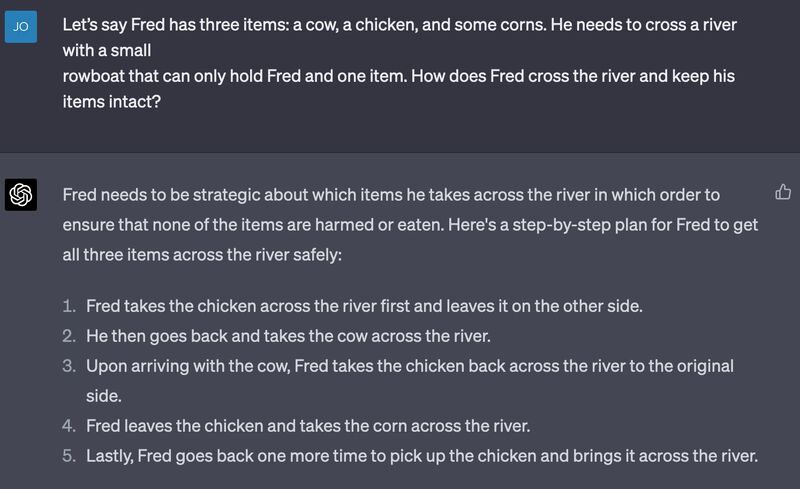

Creating AGI (Artificial General Intelligence) is an ambitious goal: building an AI that can understand and learn as humans do. Any extraordinary claim of having achieved AGI, or of approaching it, therefore needs to be carefully examined and qualified. A case in point is [1]: as [2] clearly illustrates, we need to make sure that LLMs are actually reasoning and not just retrieving memorized answers.

(On Mastodon)

Originally posted on LinkedIn.

References

[1] Sebastien Bubeck, Varun Chandrasekaran, Ronen Eldan, Johannes Gehrke, Eric Horvitz, Ece Kamar, Peter Lee, Yin Tat Lee, Yuanzhi Li, Scott Lundberg, Harsha Nori, Hamid Palangi, Marco Túlio Ribeiro, and Yi Zhang. 2023. “Sparks of Artificial General Intelligence: Early experiments with GPT-4.” http://arxiv.org/abs/2303.12712

[2] Joe on Mastodon: https://qoto.org/@twitskeptic/110204902784288127