Fun with DALL-E 2 and Semantics Leakage

Fun with DALL-E 2 and semantics leakage! I wonder how much of this comes from language models treating language more or less as a bag of words, and how much from their being knowledge-challenged.

Royi Rassin, Shauli Ravfogel, and Yoav Goldberg. 2022. “DALLE-2 Is Seeing Double: Flaws in Word-to-Concept Mapping in Text2Image Models.” arXiv [cs.CL]. [1]

Abstract:

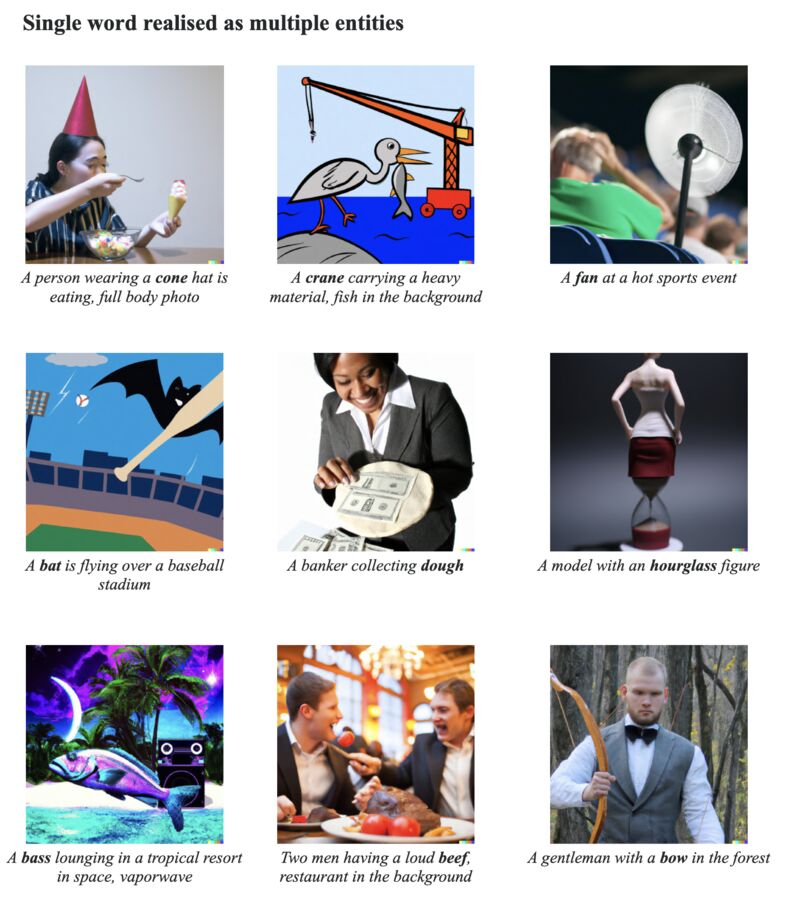

We study the way DALLE-2 maps symbols (words) in the prompt to their references (entities or properties of entities in the generated image). We show that in stark contrast to the way humans process language, DALLE-2 does not follow the constraint that each word has a single role in the interpretation, and sometimes re-uses the same symbol for different purposes. We collect a set of stimuli that reflect the phenomenon: we show that DALLE-2 depicts both senses of nouns with multiple senses at once; and that a given word can modify the properties of two distinct entities in the image, or can be depicted as one object and also modify the properties of another object, creating a semantic leakage of properties between entities. Taken together, our study highlights the differences between DALLE-2 and human language processing and opens an avenue for future study on the inductive biases of text-to-image models.

Originally posted on LinkedIn.

References

[1] Royi Rassin, Shauli Ravfogel, and Yoav Goldberg. “DALLE-2 Is Seeing Double: Flaws in Word-to-Concept Mapping in Text2Image Models.” arXiv, 2022. http://arxiv.org/abs/2210.10606