IBM Neuro-Symbolic AI Summer School Day 1/2: AMR and MRS (Rademaker, Astudillo)


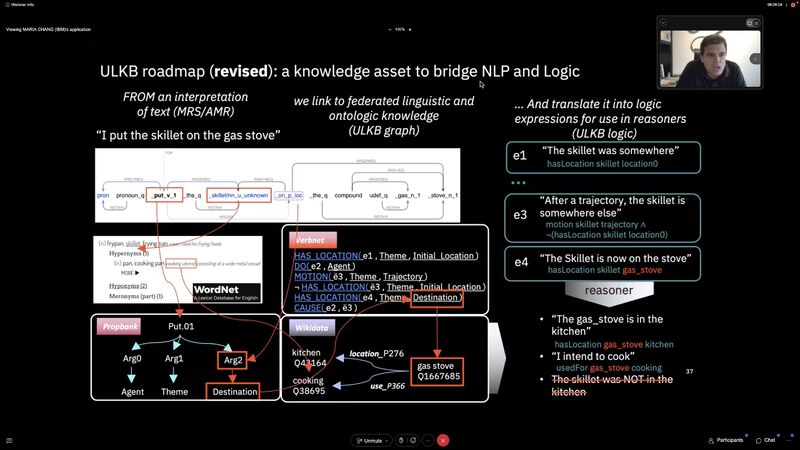

Text → AMR/MRS → ULKB Logic. AMR robustly captures the 5 Ws; MRS handles multiple quantifiers and their scoping. The latest AMR parsers are transition-based and use Structured-BART.

IBM Neuro-Symbolic AI Summer School 2022, Day 1/2: AMR (Abstract Meaning Representation) and MRS (Minimal Recursion Semantics), presented by Alexandre Rademaker and Ramón Fernández Astudillo:

- Text -> AMR/MRS -> ULKB Logic

- AMR robustly captures the 5 Ws (who, what, when, where, why), but it can represent at most one quantifier and has no notion of quantifier scope.

- MRS handles multiple quantifiers and their scoping, and it is closer to ULKB Logic.

- Both AMR parsing and AMR generation have improved greatly in the past few years.

- The latest approach is transition-based AMR parsing with Structured-BART.
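
To make the scoping point concrete: in "Every student read a book", AMR records who read what, but not whether a single book outscopes all the students. MRS can leave the relative scope underspecified and let a resolver produce each scoped reading. The sketch below is a toy illustration, not AMR or MRS tooling: it enumerates the two quantifier orderings and evaluates each against a small made-up model (the names `STUDENTS`, `BOOKS`, `READ`, and `readings` are hypothetical).

```python
from itertools import permutations

# Hypothetical toy model: three students, two books, and who read which book.
STUDENTS = {"ann", "bob", "cy"}
BOOKS = {"b1", "b2"}
READ = {("ann", "b1"), ("bob", "b1"), ("cy", "b2")}

# Each quantifier is (determiner, bound variable, restriction set).
QUANTIFIERS = [("every", "x", STUDENTS), ("a", "y", BOOKS)]


def body(env):
    """Nuclear scope of the sentence: read(x, y)."""
    return (env["x"], env["y"]) in READ


def evaluate(order, env=None):
    """Evaluate quantifiers in the given outer-to-inner order, then the body."""
    env = env or {}
    if not order:
        return body(env)
    (det, var, restriction), rest = order[0], order[1:]
    results = (evaluate(rest, {**env, var: value}) for value in restriction)
    # "every" is universal quantification, "a" is existential.
    return all(results) if det == "every" else any(results)


def readings():
    """Map each scope ordering (outermost first) to its truth value."""
    return {
        " > ".join(det for det, _, _ in order): evaluate(list(order))
        for order in permutations(QUANTIFIERS)
    }


print(readings())  # {'every > a': True, 'a > every': False}
```

The two orderings disagree in this model: every student read some book, but no single book was read by every student. A representation that cannot express this difference cannot support the inference, which is why multi-quantifier scoping matters for the step from text to logic.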

Young-Suk Lee, Ramón Fernández Astudillo, Hoang Thanh Lam, Tahira Naseem, Radu Florian, and Salim Roukos. 2022. “Maximum Bayes Smatch Ensemble Distillation for AMR Parsing.” In Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies. https://doi.org/10.18653/v1/2022.naacl-main.393.
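
The paper title refers to Smatch, the standard AMR metric: the F1 of matching triples between two graphs under the best variable alignment. The sketch below is a simplified illustration under loud assumptions. It skips Smatch's alignment search by assuming variable names already agree, and it picks, from several parser outputs, the one with the highest mean score against the others. That consensus rule is a generic minimum-Bayes-risk-style selection suggested by the title, not necessarily the paper's exact procedure. The names `triple_f1`, `consensus_pick`, and the three candidate graphs are hypothetical.

```python
from typing import List, Set, Tuple

Triple = Tuple[str, str, str]


def triple_f1(test: Set[Triple], gold: Set[Triple]) -> float:
    """Smatch-style F1 over triples, ASSUMING variables are already aligned.

    Real Smatch also searches for the variable mapping that maximizes the
    number of matched triples; that search is omitted here.
    """
    matched = len(test & gold)
    if matched == 0:
        return 0.0
    precision = matched / len(test)
    recall = matched / len(gold)
    return 2 * precision * recall / (precision + recall)


def consensus_pick(candidates: List[Set[Triple]]) -> int:
    """Index of the candidate with the highest mean F1 against all others."""
    if len(candidates) == 1:
        return 0

    def mean_agreement(i: int) -> float:
        others = [c for j, c in enumerate(candidates) if j != i]
        return sum(triple_f1(candidates[i], o) for o in others) / len(others)

    return max(range(len(candidates)), key=mean_agreement)


# Hypothetical parser outputs for "The boy wants to go", as triples.
PARSE_A = {("w", "instance", "want-01"), ("b", "instance", "boy"),
           ("g", "instance", "go-02"), ("w", "ARG0", "b"),
           ("w", "ARG1", "g"), ("g", "ARG0", "b")}
PARSE_B = PARSE_A - {("g", "ARG0", "b")}  # drops the reentrant edge
PARSE_C = (PARSE_B - {("g", "instance", "go-02")}) | {("g", "instance", "go-01")}
CANDIDATES = [PARSE_A, PARSE_B, PARSE_C]

print(consensus_pick(CANDIDATES))  # 1
```

Here `PARSE_B` wins because it shares the most triples with both of the other outputs, which is the intuition behind selecting a consensus graph from an ensemble.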

Summer School site: https://ibm.github.io/neuro-symbolic-ai/events/ns-summerschool2022/

Originally posted on LinkedIn.