Liang on KG + MLM @ NAACL 2022

NLP

NLU

knowledge graphs

foundation models

conference

NAACL

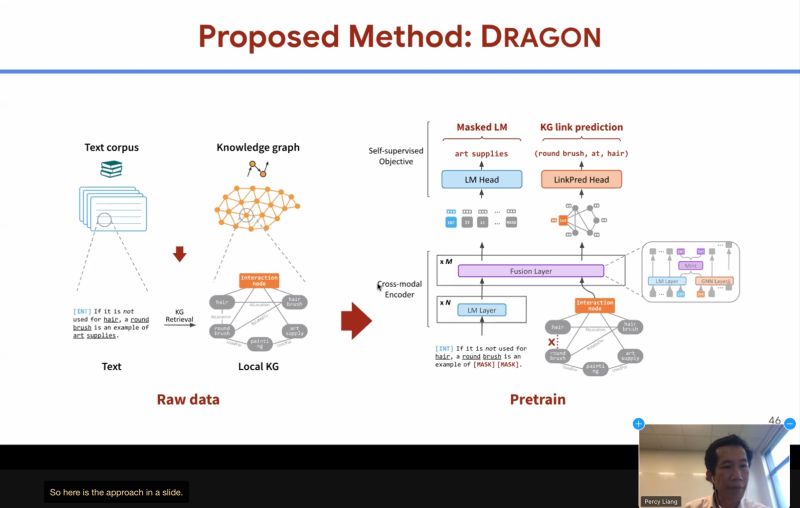

Percy Liang at SUKI: KG link prediction + MLM objective improves foundation models — even on negation, surprisingly.

Professor Percy Liang (Stanford University) gave an invited talk at the SUKI Workshop @ NAACL 2022 with a concrete example of how knowledge graphs (KGs) can help improve foundation models: apply KG link prediction alongside the usual MLM (masked language modeling) objective!
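To make the idea concrete, here is a minimal sketch of what training on both objectives at once could look like. It is my own illustration, not code from the talk: the DistMult scorer, the negative-sampling loss, the `weight` parameter, and all function names are assumptions picked for simplicity.

```python
import math


def mlm_loss(token_logits, target_id):
    """Cross-entropy for one masked token: -log softmax(logits)[target]."""
    m = max(token_logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in token_logits))
    return log_z - token_logits[target_id]


def distmult_score(head, relation, tail):
    """DistMult triple score: sum_i h_i * r_i * t_i (assumed scorer)."""
    return sum(h * r * t for h, r, t in zip(head, relation, tail))


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def link_prediction_loss(pos_score, neg_scores):
    """Logistic loss: score the held-out true edge high, corrupted edges low."""
    loss = softplus(-pos_score)  # equals -log sigmoid(pos_score)
    # Average of -log sigmoid(-s) over the corrupted (negative) triples.
    loss += sum(softplus(s) for s in neg_scores) / len(neg_scores)
    return loss


def joint_loss(token_logits, target_id, pos_score, neg_scores, weight=1.0):
    """Sum of the two objectives; `weight` is a hypothetical balancing knob."""
    return mlm_loss(token_logits, target_id) + weight * link_prediction_loss(
        pos_score, neg_scores
    )
```

In a real model the token logits and the entity/relation vectors would both come from the same network, so gradients from the link prediction term also shape the text representations. That sharing is presumably where the benefit comes from.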

I’m especially intrigued by the BIG jump in performance on negation (the last figure): how would link prediction help when there’s no link?

Michihiro Yasunaga is the main contributor.

SUKI Workshop @ NAACL2022: https://suki-workshop.github.io/

Originally posted on LinkedIn.