The Curious Case of Control

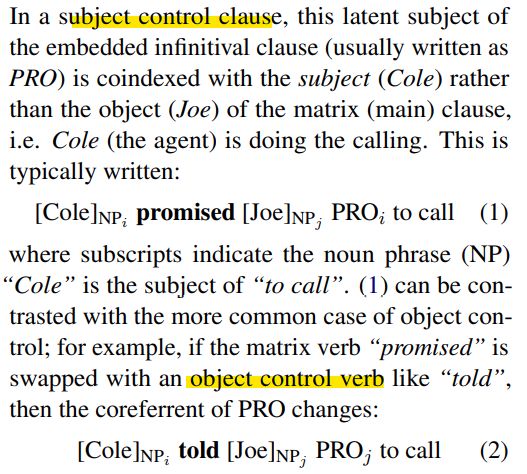

An interesting paper testing whether large generative language models show the same difficulties with subject control clauses as children learning English do. (In "Mary promised John to leave", the subject Mary is the one leaving; in "Mary persuaded John to leave", it is the object John.) Surprisingly, most of these models instead have more difficulty with object control clauses, even though object control is far more frequent in their training data.

In the zero-shot chart, T5 behaves quite differently from the other models: it totally flunks the subject control tests but completely aces the object control tests.

Elias Stengel-Eskin and Benjamin Van Durme. 2022. “The Curious Case of Control.” arXiv [cs.CL]. http://arxiv.org/abs/2205.12113.

Abstract: Children acquiring English make systematic errors on subject control sentences even after they have reached near-adult competence (C. Chomsky, 1969), possibly due to heuristics based on semantic roles (Maratsos, 1974). Given the advanced fluency of large generative language models, we ask whether model outputs are consistent with these heuristics, and to what degree different models are consistent with each other. We find that models can be categorized by behavior into three separate groups, with broad differences between the groups. The outputs of models in the largest group are consistent with positional heuristics that succeed on subject control but fail on object control. This result is surprising, given that object control is orders of magnitude more frequent in the text data used to train such models. We examine to what degree the models are sensitive to prompting with agent-patient information, finding that raising the salience of agent and patient relations results in significant changes in the outputs of most models. Based on this observation, we leverage an existing dataset of semantic proto-role annotations (White, et al. 2020) to explore the connections between control and labeling event participants with properties typically associated with agents and patients.

Originally posted on LinkedIn.