NLP Datasets: Reduced, Reused, and Recycled

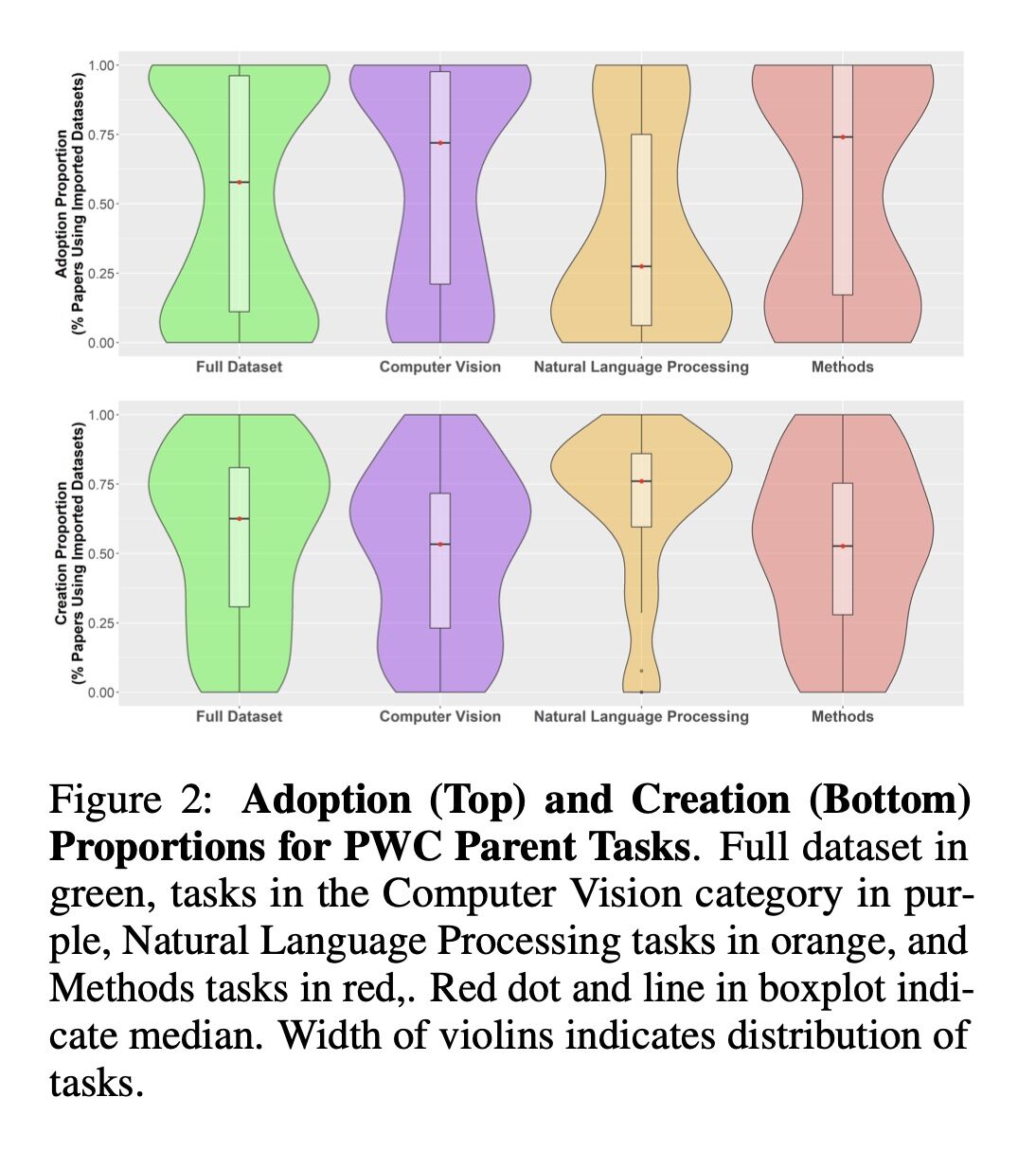

Interesting NeurIPS2021 paper showing that NLP researchers infrequently adopt datasets from other tasks in their studies (half of them do this 27.4% of the time), but frequently create their own datasets for their reported research (more than half at 76.0%). Contrast this to Computer Vision, where the numbers are 71.9% and 53.3%, respectively. You would think NLP datasets are harder to create, no?

And among for-profit organizations who create datasets for ML research, Microsoft comes out on top in terms of their dataset popularity in papers surveyed from Paper with Code (PWC).

Bernard Koch, Emily Denton, Alex Hanna, Ph.D., and Jacob G. Foster. 2021. “Reduced, Reused and Recycled: The Life of a Dataset in Machine Learning Research.” arXiv [cs.LG]. arXiv. http://arxiv.org/abs/2112.01716.

Abstract: Benchmark datasets play a central role in the organization of machine learning research. They coordinate researchers around shared research problems and serve as a measure of progress towards shared goals. Despite the foundational role of benchmarking practices in this field, relatively little attention has been paid to the dynamics of benchmark dataset use and reuse, within or across machine learning subcommunities. In this paper, we dig into these dynamics. We study how dataset usage patterns differ across machine learning subcommunities and across time from 2015-2020. We find increasing concentration on fewer and fewer datasets within task communities, significant adoption of datasets from other tasks, and concentration across the field on datasets that have been introduced by researchers situated within a small number of elite institutions. Our results have implications for scientific evaluation, AI ethics, and equity/access within the field.

Originally posted on LinkedIn.